Satellite-Derived Bathymetry: Mapping the Unseen Seafloor from Space

Mapping the global seabed is essential for understanding our planet and its climate. Yet with roughly 75% of the ocean floor still unmapped, vast areas of our oceans remain largely unexplored.

For everyday life, coastal zones are especially critical. To monitor and anticipate coastal change, we require accurate, large-scale measurements of key parameters such as bathymetry. However, nearly half of the world’s coastal waters remain unsurveyed today.

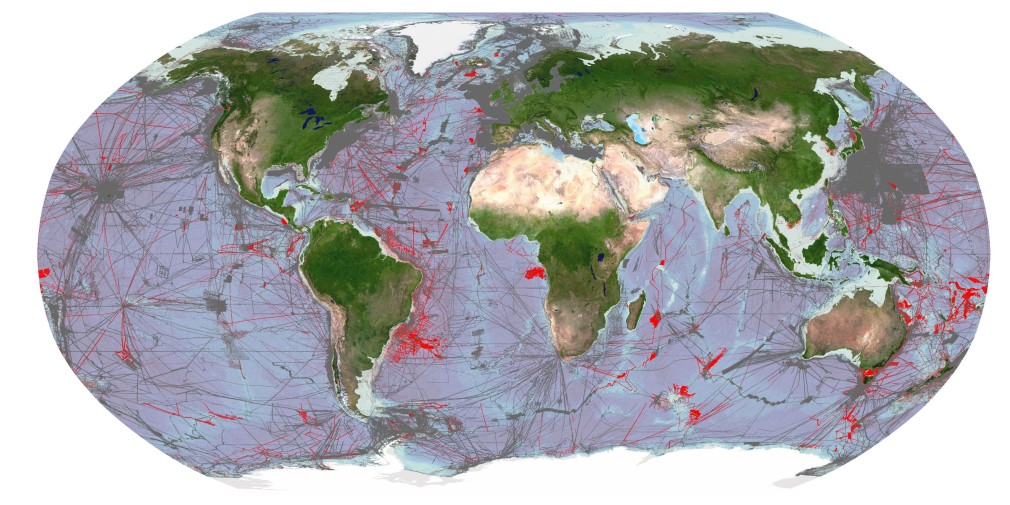

Image showing areas of the global seafloor considered to be mapped by the General Bathymetric Chart of the Oceans (GEBCO). Grey areas depict coverage as of 2021; red areas are additions for 2022. Credit: The Nippon Foundation-GEBCO Seabed 2030 Global Center (GDACC) on behalf of Seabed 2030; Source: seabed2030.org

Our vital coastal regions

The low-lying land bordering shallow coastal waters is home to about 10% of the global population and includes many megacities. These areas are crucial for industrial activities such as maritime navigation, as well as for coastal protection and resource management. At the same time, they are highly dynamic and vulnerable to both natural and human-driven pressures, including erosion, extreme weather, sea-level rise, and demographic shifts. Continuous coastal monitoring is therefore indispensable.

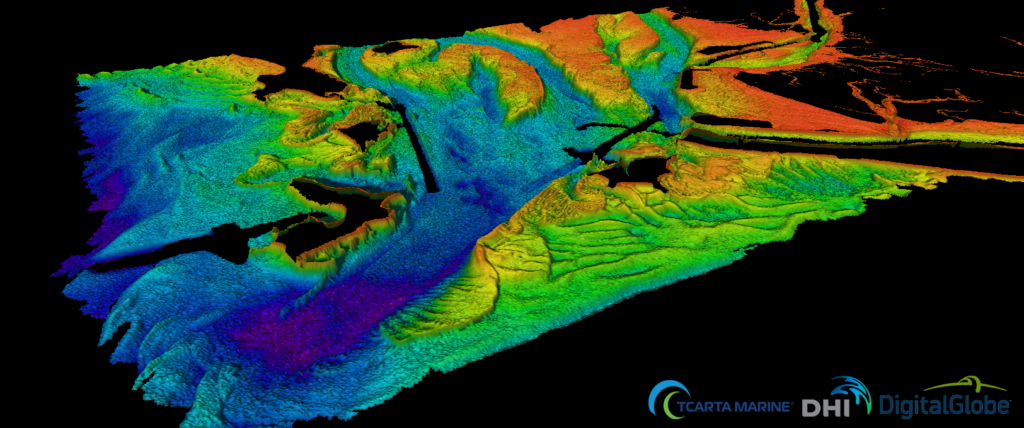

Collecting bathymetric data in shallow waters has long been challenging. Conventional techniques like sonar surveys or airborne LiDAR are expensive, time-intensive, and logistically complex. As a result, data for many coastal regions is either outdated or entirely missing.

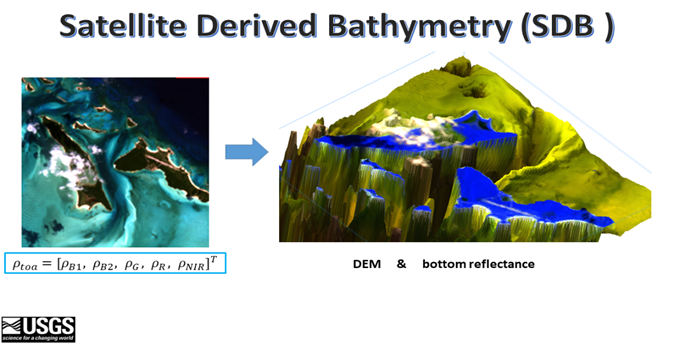

A promising way to close this gap is satellite-derived bathymetry (SDB), which leverages multispectral optical imagery to estimate water depth in nearshore areas.

How does it work?

Satellite data has been used to map the ocean floor for several decades, using techniques such as radar altimetry, wave kinematics, and even space-based lasers. These methods allowed scientists to generate an approximate model of the ocean floor, but their resolution is not sufficient for shallow waters (down to a depth of 30 m).

Satellite-derived bathymetry uses optical image data to estimate the depth of the water at a given location. One of the most effective algorithms for this is multispectral signal attenuation, which analyzes imagery using a combination of spectral bands. Because different wavelengths penetrate water to different degrees, the attenuation of light can be measured, and elevation ratios are derived by analyzing the colour profile and spectral characteristics of coastal areas with known depths. Algorithms then infer water depth from new spectral information by comparing it to known depths of similar areas. With this approach, it is possible to estimate water depth with a high level of accuracy, even down to 30 meters.

Source: USGS: https://www.usgs.gov/special-topics/coastal-national-elevation-database-%28coned%29-applications-project/science/satellite
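The calibrate-then-infer idea described above can be sketched in a few lines of Python. The source does not name a specific formula, so this sketch assumes one widely used band-ratio formulation: the ratio of the log-scaled blue and green reflectances is fitted linearly against known depths, and the fitted line is then applied to new pixels. The function names, the scaling constant `n`, and all reflectance and depth values are hypothetical and chosen only for illustration; they are not taken from any real survey or sensor.

```python
import math

def log_ratio(blue, green, n=1000.0):
    """Ratio of log-scaled reflectances for two bands.

    n is a fixed scaling constant (an assumption here) that keeps both
    logarithms positive for reflectances above 1/n.
    """
    return math.log(n * blue) / math.log(n * green)

def fit_line(xs, ys):
    """Ordinary least-squares fit of ys ~ slope * xs + intercept."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def estimate_depth(blue, green, slope, intercept):
    """Apply the calibrated linear model to a single pixel."""
    return slope * log_ratio(blue, green) + intercept

# Hypothetical calibration pixels (invented for illustration):
# (blue reflectance, green reflectance, surveyed depth in meters)
calibration = [
    (0.080, 0.075, 2.0),
    (0.070, 0.058, 5.0),
    (0.060, 0.042, 10.0),
    (0.050, 0.028, 15.0),
    (0.045, 0.020, 20.0),
]

# Calibrate: relate the band ratio to the known depths.
ratios = [log_ratio(b, g) for b, g, _ in calibration]
depths = [z for _, _, z in calibration]
slope, intercept = fit_line(ratios, depths)

# Infer: estimate depth for a new pixel with no survey data (values invented).
new_depth = estimate_depth(0.055, 0.035, slope, intercept)
print(f"estimated depth: {new_depth:.1f} m")
```

In this toy data the green band fades faster than the blue band as depth increases, so the ratio grows with depth and the fitted slope is positive. A real workflow would first apply atmospheric and sun-glint corrections and would validate the fit against independent depth measurements, neither of which is shown here.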

The better the resolution and the more spectral bands used in the data, the more accurate the results will be, but even lower resolution data can provide valuable information.

Today, data for SDB is acquired from various satellite sources, such as Landsat 8 (NASA), Sentinel-2 (ESA), and Pléiades and Pléiades Neo (Airbus). Pléiades Neo features a new spectral band called Deep Blue, with wavelengths of 400-450 nm, specifically designed for satellite-derived bathymetry and atmospheric corrections. This band allows for much better interpretation of optical data for shallow waters than was previously possible.

What are the benefits of SDB?

SDB is a cost-effective and time-efficient alternative to traditional on-site methods. It doesn’t require ships or human divers, which makes data collection safer and dramatically reduces the environmental impact caused by more intrusive methods.

Airborne LiDAR bathymetry is another technique for measuring the seafloor from the skies. It uses an active sensor that emits green laser light, as opposed to a passive optical satellite sensor. LiDAR gives higher accuracy than SDB, but at a much higher cost, as it requires a dedicated airborne survey and only produces data for a relatively small area. SDB, on the other hand, uses existing satellite infrastructure, its images cover a wider area, and data can be captured multiple times a day, which gives it both repeatability and scalability. It is also time-efficient and can provide near-real-time monitoring, making it ideal for regular oceanographic management.

SDB is not without its disadvantages. For instance, it can lack spatial resolution when compared to other methods. Depending on the type of technology used, satellite imagery may be affected by weather conditions, water quality, and the presence of vegetation. It has limited effectiveness in deeper waters. Despite these limitations, SDB is a powerful tool for measuring shallow waters, and can be used very effectively in combination with other methods—above all because the data can be captured with such regularity.

Source: https://business.esa.int/projects/international-satellite-derived-shallow-water-bathymetry-service

Applications of SDB

SDB in coastal regions can play a key role in a variety of practical applications that are essential to our current way of life and preservation of our future.

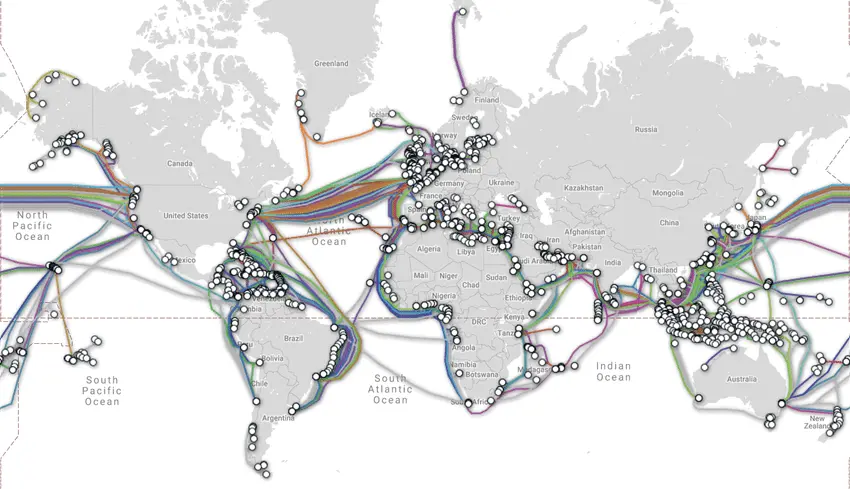

Bathymetry is an important tool for many industries. In terms of trade and logistics, accurate bathymetric data is essential for ships and port authorities to ensure safe navigation. Sea transportation is an essential component in over 90% of international trade, and the ships used to transport these goods are growing ever bigger. Understanding the shape of the ocean floor is a vital input for models of ocean currents and waves, and therefore bathymetry can help to improve sea-state monitoring, including wave prediction and more. For the telecommunications industry, bathymetry is used to plan the routes of subsea fiber-optic cables, avoiding coral reefs, shipwrecks, sensitive areas and geological obstructions. Around 474 of these cables are responsible for transmitting 99% of international data (according to TeleGeography, 2021).

Map of global subsea fiber-optic cables. Source: www.submarinecablemap.com

Energy companies also rely on bathymetry to explore for and extract oil and gas, and to develop new energy resources such as wind and ocean energy. It is also used to monitor sediment movement in the corridor between the coast and the energy exploitation site, to ensure the integrity of the cables connecting wind turbines to the shore. These cables are a critical part of the infrastructure for wind energy, which was the fastest-growing blue economy sector as of 2019.

Bathymetry is also used to estimate and control the volume of materials extracted from the seabed and monitor changes caused by dredging activities. Dredging is essential for many industrial and civil engineering applications, but can contribute to coastal erosion and environmental damage if not carefully managed. More broadly, bathymetry is used to better understand coastal erosion caused by both natural and human factors, and can aid in better management of our coasts, including the development of innovative coastal defense systems for risk mitigation and post-crisis analysis.

Accurate and precise knowledge of the seabed is also key to protecting undersea conservation sites and archaeological areas. One way that bathymetry data can help with this is by modeling underwater noise caused by human activity. Furthermore, the technology contributes to food security: it helps to identify locations for offshore aquaculture cages and supports habitat mapping and impact modeling, all of which help to meet the increasing demand for seafood.

Bathymetry even aids in issues of sovereignty: sovereign waters are defined by the limits of the continental shelf, as dictated by the United Nations Convention on the Law of the Sea (UNCLOS). Bathymetry helps to establish these boundaries and justify the rights of a coastal state to explore (and exploit) its maritime territory.

Satellite-Derived Bathymetry: An essential tool for the future

As climate change intensifies and coastal zones face increasing pressure, monitoring shallow waters is becoming more critical than ever. Satellite-derived bathymetry is emerging as a key instrument for hydrographers and coastal managers as the technology advances.

Its strengths—remote deployment, cost efficiency, safety, scalability, and minimal environmental impact—make SDB a compelling alternative to conventional surveys. Although accuracy and depth limitations remain, ongoing innovation promises substantial improvements.

The rise of affordable small satellites and expanded spectral capabilities, such as Pléiades Neo’s Deep Blue band, is accelerating SDB’s development. Future integration with computer vision and deep learning models is likely to further enhance performance.

With its ability to monitor coastal waters remotely, efficiently, and at scale, SDB is set to become a cornerstone technology for understanding and managing one of our planet’s most vital and vulnerable environments.
