Quantum Geospatial: Beyond The Limits of Big Data

For years, geospatial systems have followed a simple idea: collect more data and decisions will improve. Cities now use sensors, phones, cameras, and satellites to build digital twins that simulate how urban systems behave. At small scales, this works well. But when we try to model entire cities, the system starts to break down.

The problem is no longer collecting data. It is processing it fast enough to make useful decisions.

Classical computers solve problems step by step. To optimize something like traffic, they evaluate one route, then another, then another. This works for a small area, but as cities grow, the number of possible paths grows combinatorially. Research on large urban systems shows how complexity increases as more components are modeled together. When moving from individual systems to entire cities, the number of interactions between transport, infrastructure, and environmental factors grows rapidly, making real-time analysis significantly harder for classical approaches.
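The growth can be made concrete with a minimal Python sketch under a deliberately simplified assumption. The street network is a regular n × n grid, and a trip runs corner to corner using only shortest moves (east or north). Every such route is an arrangement of n east steps and n north steps, so the count is the binomial coefficient C(2n, n). The function name is illustrative.

```python
from math import comb

def shortest_grid_routes(n: int) -> int:
    """Count shortest corner-to-corner routes on an n x n block grid.

    Each shortest route is a sequence of n 'east' and n 'north' moves,
    so the count is the number of ways to place the n east moves
    among the 2n steps: C(2n, n).
    """
    return comb(2 * n, n)

for n in (2, 5, 10, 20, 40):
    print(f"{n:>2} x {n:<2} grid: {shortest_grid_routes(n):,} shortest routes")
```

Going from a 10 × 10 grid to a 20 × 20 grid multiplies the count by more than 700,000. Finding a single shortest path is still cheap for algorithms such as Dijkstra's, which never enumerate routes. The explosion matters when routes for many vehicles must be chosen together.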

Because of this, most systems rely on shortcuts. These heuristics find good enough answers, but not always the best ones. In traffic systems, this can create cascading problems. A route that looks efficient for one driver may create congestion when thousands follow it. Work on large-scale mobility systems highlights a key difficulty: optimizing routes for many vehicles at the same time without shifting congestion from one part of the network to another.
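The "everyone takes the fast route" effect can be reproduced with the standard BPR (Bureau of Public Roads) volume-delay function, t = t0 · (1 + 0.15 · (v/c)^4), where travel time rises steeply once volume v approaches capacity c. The two routes, their capacities, and the demand below are hypothetical numbers chosen only for illustration.

```python
def bpr_time(free_flow_min: float, volume: float, capacity: float,
             alpha: float = 0.15, beta: float = 4.0) -> float:
    """BPR volume-delay function: travel time grows steeply with load."""
    return free_flow_min * (1 + alpha * (volume / capacity) ** beta)

# Hypothetical two-route corridor: route A is faster when empty.
ROUTE_A = {"free_flow_min": 10.0, "capacity": 1000.0}
ROUTE_B = {"free_flow_min": 12.0, "capacity": 1000.0}
DEMAND = 3000  # vehicles travelling in the same period (assumed)

def total_vehicle_minutes(on_a: int) -> float:
    """Total delay when `on_a` vehicles take route A and the rest take B."""
    on_b = DEMAND - on_a
    t_a = bpr_time(ROUTE_A["free_flow_min"], on_a, ROUTE_A["capacity"])
    t_b = bpr_time(ROUTE_B["free_flow_min"], on_b, ROUTE_B["capacity"])
    return on_a * t_a + on_b * t_b

# Every driver picks the route that looks fastest for a single car.
everyone_on_a = total_vehicle_minutes(DEMAND)

# System view: try every integer split and keep the lowest total delay.
best_split = min(range(DEMAND + 1), key=total_vehicle_minutes)
best_total = total_vehicle_minutes(best_split)

print(f"all on A:   {everyone_on_a:,.0f} vehicle-minutes")
print(f"best split: {best_split} on A, {DEMAND - best_split} on B "
      f"-> {best_total:,.0f} vehicle-minutes")
```

Sending all 3,000 vehicles down route A costs about 394,500 vehicle-minutes. The best split is far lower, even though route B is slower when empty.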

Another challenge is dimensionality. City systems depend on many factors at once, including weather, time of day, events, and infrastructure changes, and classical systems struggle to link these variables together. Research on high-dimensional geospatial modeling shows how quickly these interactions become difficult to compute. As the number of variables increases, the system must consider many more combinations at once, which significantly increases computational cost and makes real-time processing challenging.
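Here is a back-of-the-envelope sketch of the dimensionality problem. It assumes each city factor (weather, time of day, events, closures, and so on) is discretized into a fixed number of levels, five here, which is an illustrative choice. Under that assumption, the number of joint configurations grows exponentially with the number of factors, and even the pairwise interactions grow quadratically.

```python
from math import comb

def joint_states(num_factors: int, levels_per_factor: int) -> int:
    """Distinct joint configurations of independent discrete factors."""
    return levels_per_factor ** num_factors

def pairwise_interactions(num_factors: int) -> int:
    """Distinct factor pairs a model may need to couple."""
    return comb(num_factors, 2)

for n in (4, 8, 16, 32):
    print(f"{n:>2} factors, 5 levels each: "
          f"{joint_states(n, 5):,} joint states, "
          f"{pairwise_interactions(n)} pairwise interactions")
```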

Even when solutions exist, they are expensive. Large-scale optimization systems require significant computing power and energy. Advances like high-speed route optimization methods improve performance by up to two orders of magnitude, but they do not remove the underlying scaling problem. Similar challenges arise in broader discussions of geospatial big-data systems and route optimization at scale.
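A short calculation shows why a constant-factor speedup does not remove the scaling problem. For illustration only, assume that the cost of an exhaustive search grows as 2^n in the problem size n. If one machine handles size n within a time budget, a machine that is s times faster handles size n' within the same budget:

```latex
2^{n'} = s \cdot 2^{n}
\quad\Longrightarrow\quad
n' = n + \log_2 s,
\qquad
s = 100 \;\Rightarrow\; n' \approx n + 6.64
```

Under this assumption, a hundredfold speedup buys fewer than seven additional binary decisions.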

A simple way to understand this is to think of a maze. A classical computer tries to solve it by exploring one path at a time. That works when the maze is small. But for a city, there are too many paths to explore.

This article looks at what happens when we try a different approach. It explains how quantum computing changes the way problems are represented, where it may help in geospatial systems such as optimization and pattern detection, and why the future is likely to be a mix of classical and quantum methods rather than a full replacement.

A Different Approach: Quantum Computation

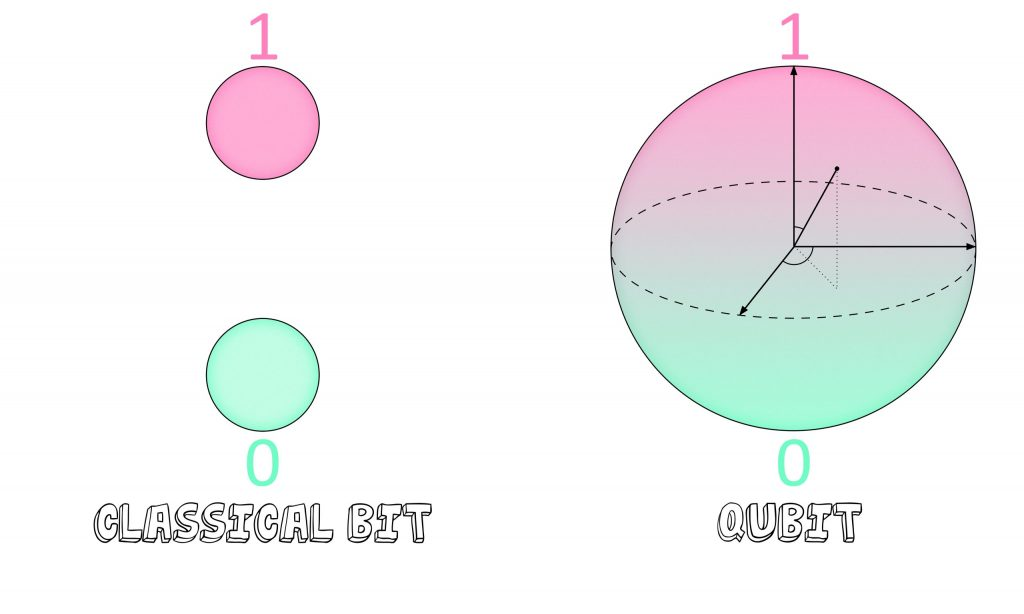

Quantum computing changes how problems are represented rather than just speeding them up. Instead of bits that are either 0 or 1, it uses qubits, which can exist in multiple states at once. This idea, called superposition, allows the system to encode many possible solutions simultaneously rather than checking them one by one. IBM’s overview of quantum computing describes how this shifts computation from step-by-step search to working with probability distributions.

Source: Fermilab
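A minimal statevector sketch in plain NumPy (no quantum SDK assumed) shows what "encoding many solutions at once" means, and what it does not. Applying a Hadamard gate to each of n qubits spreads the state evenly over all 2^n bit strings. A measurement still returns just one of them, sampled according to the squared amplitudes. The function names are illustrative.

```python
import numpy as np

H = np.array([[1.0, 1.0], [1.0, -1.0]]) / np.sqrt(2)  # Hadamard gate

def uniform_superposition(n_qubits: int) -> np.ndarray:
    """Apply H to every qubit of |00...0> and return the 2**n statevector."""
    state = np.zeros(2 ** n_qubits)
    state[0] = 1.0  # start in |00...0>
    h_all = np.array([[1.0]])
    for _ in range(n_qubits):
        h_all = np.kron(h_all, H)  # H (x) H (x) ... (x) H; fine for small n only
    return h_all @ state

def measure(state: np.ndarray, shots: int, rng: np.random.Generator) -> np.ndarray:
    """Sample basis-state indices with probability |amplitude|^2."""
    probs = np.abs(state) ** 2
    return rng.choice(len(state), size=shots, p=probs)

state = uniform_superposition(3)
print(np.round(np.abs(state) ** 2, 3))  # eight equal probabilities of 0.125
print(measure(state, shots=5, rng=np.random.default_rng(7)))  # five sampled outcomes
```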

This matters for geospatial problems because many of them involve exploring large solution spaces. Instead of evaluating each route or configuration separately, quantum systems reshape the search so that better solutions become more likely. In simple terms, it is less about trying every path and more about guiding the system toward good ones.

Two additional properties make this possible. Entanglement correlates qubits so that their measurement outcomes cannot be described independently, which may be useful for modeling connected urban systems. Interference lets well-designed algorithms amplify the probability of better solutions while suppressing weaker ones. Comparisons of quantum vs classical computation explain how this differs from traditional trial-and-error approaches.
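Interference is easiest to see in Grover's search algorithm. This is a standard textbook example, not a geospatial method in itself. The sketch simulates it with NumPy statevectors. An oracle flips the sign of one "good" bit string, and a diffusion step reflects all amplitudes about their mean, so the good amplitude grows while the others shrink. The problem size and the marked index are arbitrary assumptions.

```python
import numpy as np

def grover_probabilities(n_qubits: int, marked: int, iterations: int) -> np.ndarray:
    """Measurement probabilities after `iterations` Grover steps (real amplitudes)."""
    dim = 2 ** n_qubits
    state = np.full(dim, 1 / np.sqrt(dim))   # uniform superposition
    for _ in range(iterations):
        state[marked] *= -1                  # oracle: phase-flip the good answer
        state = 2 * state.mean() - state     # diffusion: reflect about the mean
    return state ** 2

n, marked = 6, 42                                    # 64 candidates, one good one (hypothetical)
best_k = int(np.floor(np.pi / 4 * np.sqrt(2 ** n)))  # near-optimal iteration count (6 here)
for k in (0, 1, 3, best_k):
    p = grover_probabilities(n, marked, k)[marked]
    print(f"after {k} iterations: P(good answer) = {p:.3f}")
```

About (π/4)·√N iterations are needed, compared with about N/2 checks on average for a classical scan. This is a quadratic, not exponential, speedup, and it applies only where such an oracle can be built.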

These ideas are already being explored in real scenarios. Experiments show how quantum methods can be applied to routing problems, though still at a limited scale.

Quantum systems are still early and technically challenging. They require specialized hardware and are often accessed through cloud platforms rather than owned directly. But they introduce a different way of thinking about computation, one that is especially relevant for problems where the number of possibilities becomes too large for classical systems to handle efficiently.

Optimization at Scale

Optimization means finding the best way to do something, like routing vehicles through a city. This becomes difficult when thousands of vehicles are involved, because the number of possible routes grows very quickly.

Early experiments show how quantum methods can help. Work like Volkswagen’s traffic optimization project explored routing for large fleets, spreading vehicles across different paths instead of sending everyone through the same “fast” route. This helps reduce congestion rather than shifting it. Volkswagen’s Lisbon quantum shuttle experiment, a real-world trial, demonstrated this idea at a smaller scale: buses were dynamically routed based on city-wide traffic conditions.
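Fleet routing problems of this kind are often posed for quantum annealers as a QUBO (quadratic unconstrained binary optimization). A binary variable x[car, r] is 1 if the car takes candidate route r. The objective sums, over road segments, the squared number of cars using each segment, which penalizes crowding. A penalty term enforces exactly one route per car. The sketch builds such a QUBO for a hypothetical three-car network and solves it by brute force. Brute force stands in for an annealer here and is feasible only at toy size. The network, the penalty weight, and all function names are assumptions.

```python
from collections import defaultdict
from itertools import product

# Hypothetical network: route 0 for every car is a shared "fast" highway segment;
# route 1 is a detour over that car's own side streets.
ROUTES = {
    "car1": [{"highway"}, {"b1", "c1"}],
    "car2": [{"highway"}, {"b2", "c2"}],
    "car3": [{"highway"}, {"b3", "c3"}],
}
PENALTY = 10.0  # weight of the "exactly one route per car" constraint (assumed)

def build_qubo(routes, penalty):
    """Return (variables, {(i, j): weight}, constant offset) for the routing QUBO."""
    variables = [(car, r) for car, opts in routes.items() for r in range(len(opts))]
    q = defaultdict(float)
    # Congestion: sum over segments of (cars on segment)^2. Adding 1 for every
    # ordered pair (a, b) expands the square, since x * x == x for binaries.
    segments = set().union(*(route for opts in routes.values() for route in opts))
    for seg in segments:
        users = [v for v in variables if seg in routes[v[0]][v[1]]]
        for a in users:
            for b in users:
                q[tuple(sorted((a, b)))] += 1.0
    # Constraint: penalty * (sum_r x[car, r] - 1)^2, expanded the same way.
    for car, opts in routes.items():
        car_vars = [(car, r) for r in range(len(opts))]
        for a in car_vars:
            for b in car_vars:
                q[tuple(sorted((a, b)))] += penalty
            q[(a, a)] -= 2 * penalty
    offset = penalty * len(routes)  # constant term of the expanded penalty
    return variables, dict(q), offset

def energy(q, offset, assignment):
    return offset + sum(w * assignment[i] * assignment[j] for (i, j), w in q.items())

def solve_brute_force(variables, q, offset):
    """Check all 2**n bit strings (a stand-in for an annealer)."""
    best_e, best_x = None, None
    for bits in product((0, 1), repeat=len(variables)):
        x = dict(zip(variables, bits))
        e = energy(q, offset, x)
        if best_e is None or e < best_e:
            best_e, best_x = e, x
    return best_e, best_x

variables, q, offset = build_qubo(ROUTES, PENALTY)
all_fast = {v: int(v[1] == 0) for v in variables}
print("everyone on the highway:", energy(q, offset, all_fast))  # 9.0
best_e, best_x = solve_brute_force(variables, q, offset)
print("best:", sorted(v for v, bit in best_x.items() if bit), "energy:", best_e)  # energy 5.0
```

The optimum sends one car onto the highway and the other two onto their detours. With V cars and k candidate routes each, there are k^V valid assignments and 2^(kV) bit strings, which is why exhaustive search only works as a toy here.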

Similar ideas are being explored in logistics. Studies on quantum approaches for logistics optimization and industry work like DHL’s technology-driven delivery systems show how routing, fuel use, and delivery efficiency can be improved by better optimization methods.
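Delivery routing shows the gap between heuristics and exact optimization in miniature. The sketch compares the greedy nearest-neighbor heuristic with exhaustive search. It uses a hypothetical depot and three stops along one road, with coordinates in km. Exhaustive search is optimal but needs n! orderings for n stops, so it is usable only at toy size.

```python
from itertools import permutations
from math import dist

# Hypothetical depot and delivery stops along a single road (km).
DEPOT = (0.0, 0.0)
STOPS = [(1.0, 0.0), (-2.0, 0.0), (5.0, 0.0)]

def tour_length(order):
    """Length of depot -> stops in `order` -> depot."""
    path = [DEPOT, *order, DEPOT]
    return sum(dist(a, b) for a, b in zip(path, path[1:]))

def nearest_neighbor(stops):
    """Greedy heuristic: always drive to the closest unvisited stop."""
    remaining, here, order = list(stops), DEPOT, []
    while remaining:
        nxt = min(remaining, key=lambda s: dist(here, s))
        remaining.remove(nxt)
        order.append(nxt)
        here = nxt
    return order

def exact(stops):
    """Try every visiting order: optimal, but n! work for n stops."""
    return min(permutations(stops), key=tour_length)

greedy = nearest_neighbor(STOPS)
best = exact(STOPS)
print(f"nearest neighbor: {tour_length(greedy):.1f} km")  # 16.0 km
print(f"exact:            {tour_length(best):.1f} km")    # 14.0 km
```

The greedy tour's early short hop forces a long backtrack later, which is the kind of "good enough but not best" result heuristics can produce.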

These systems are still early, but the direction is clear. Instead of optimizing one vehicle at a time, the system treats the entire city or network as a connected problem and finds more balanced solutions.

High-Dimensional Data and Pattern Detection

Modern satellites capture far more than standard images. Hyperspectral sensors record hundreds of spectral bands per pixel, forming what are often called data cubes. These allow detection of subtle patterns such as vegetation health or water quality. Research on quantum machine learning for Earth observation data highlights how complex these datasets can become: patterns often depend on correlations across many bands rather than on any single image layer.
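A minimal NumPy sketch shows the shape of a data cube and two typical operations on it. The first is NDVI, the standard vegetation index (NIR − Red) / (NIR + Red). The second is a band-to-band correlation matrix. The cube is synthetic random data, and the band indices used for "red" and "near-infrared" are placeholders rather than any real sensor's layout.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic hyperspectral cube: 50 x 60 pixels, 200 bands, reflectance in [0, 1].
rows, cols, bands = 50, 60, 200
cube = rng.uniform(0.0, 1.0, size=(rows, cols, bands))

# One spectrum per pixel: shape (pixels, bands).
spectra = cube.reshape(-1, bands)

# NDVI from two bands; RED and NIR indices are hypothetical placeholders.
RED, NIR = 60, 120
red, nir = spectra[:, RED], spectra[:, NIR]
ndvi = (nir - red) / (nir + red + 1e-9)  # epsilon guards against division by zero

# Band-to-band correlations: 200 x 200, i.e. 19,900 distinct band pairs.
band_corr = np.corrcoef(spectra, rowvar=False)

print(spectra.shape, ndvi.shape, band_corr.shape)  # (3000, 200) (3000,) (200, 200)
```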

The challenge is scale. Processing these high-dimensional datasets is computationally expensive, and classical systems often struggle to extract patterns efficiently. Work on quantum models for satellite imagery analysis explores how quantum approaches may handle these feature spaces differently.
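One way quantum models handle feature spaces differently is through a quantum kernel. Each feature vector is encoded into a quantum state, and similarity is measured as the squared overlap between states. The NumPy sketch below simulates a simple angle encoding, with one qubit per feature rotated by RY(x_i), so 4 features live in a 2^4 = 16-dimensional state space. The pixel values are hypothetical. This product-state encoding was picked for readability and has a closed-form kernel, so it offers no quantum advantage. Proposals in the literature use entangling feature maps that are conjectured to be hard to simulate classically.

```python
import numpy as np

def angle_encode(x: np.ndarray) -> np.ndarray:
    """Product state: qubit i is RY(x_i)|0> = cos(x_i/2)|0> + sin(x_i/2)|1>."""
    state = np.array([1.0])
    for xi in x:
        qubit = np.array([np.cos(xi / 2), np.sin(xi / 2)])
        state = np.kron(state, qubit)  # state dimension doubles per feature
    return state

def quantum_kernel(x: np.ndarray, y: np.ndarray) -> float:
    """Fidelity kernel k(x, y) = |<psi(x)|psi(y)>|^2 (amplitudes are real here)."""
    return float(np.abs(angle_encode(x) @ angle_encode(y)) ** 2)

# Two hypothetical pixels, 4 band values each, rescaled to angles in [0, pi].
x = np.array([0.2, 1.1, 2.0, 0.7])
y = np.array([0.3, 1.0, 2.4, 0.5])
print(angle_encode(x).shape)                # (16,): 2**4 amplitudes
print(quantum_kernel(x, y))
print(np.prod(np.cos((x - y) / 2) ** 2))   # same value via the closed form
```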

One potential advantage is pattern detection. Instead of relying on large labeled datasets, some quantum approaches aim to identify structure from fewer examples. Studies of quantum methods for hyperspectral analysis suggest that subtle signals missed by classical models may be captured more effectively.

These methods are still experimental, but they point toward faster analysis of large geospatial datasets and improved detection of small or complex changes, especially in environmental monitoring scenarios.

The Current Reality: Hybrid Systems

Quantum computing is still at an early stage. Today’s systems, often described as noisy intermediate-scale quantum (NISQ) devices, have limited numbers of qubits, are sensitive to noise, and lack full error correction, so they are not reliable enough to work on their own.
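A crude model shows why NISQ devices favor short circuits. Assume every gate fails independently with the same probability p. Then the chance that a circuit of G gates runs without any error is (1 − p)^G ≈ e^(−pG). The 0.1% error rate below is an illustrative assumption, not a figure for any particular device.

```python
import math

def error_free_probability(error_per_gate: float, num_gates: int) -> float:
    """P(no gate fails), assuming independent, identical gate errors (crude model)."""
    return (1 - error_per_gate) ** num_gates

P_GATE = 1e-3  # illustrative 0.1% error per gate (assumed, not a device spec)
for gates in (100, 1_000, 10_000):
    exact = error_free_probability(P_GATE, gates)
    approx = math.exp(-P_GATE * gates)  # e^(-pG), accurate for small p
    print(f"{gates:>6} gates: {exact:.4f} (approx. {approx:.4f})")
```

At that rate, a 1,000-gate circuit runs error-free only about 37% of the time.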

Because of this, most real use cases follow a hybrid approach. Classical systems handle data processing, storage, and user interaction, while only the hardest optimization tasks are sent to quantum hardware. For instance, the AWS Braket platform shows how both systems can work together rather than replacing each other.
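The hybrid pattern can be sketched as a variational loop. A classical optimizer repeatedly calls a quantum circuit (simulated here with NumPy) and updates the circuit's parameter. The gradient comes from the parameter-shift rule, which evaluates the same circuit at θ ± π/2. Libraries such as PennyLane automate this loop against simulators or cloud hardware. The plain version below avoids assuming any particular SDK API, and its one-qubit circuit and learning rate are illustrative choices.

```python
import numpy as np

def expectation_z(theta: float) -> float:
    """'Quantum' step (simulated): <Z> for RY(theta)|0>, which equals cos(theta)."""
    state = np.array([np.cos(theta / 2), np.sin(theta / 2)])
    z = np.array([[1.0, 0.0], [0.0, -1.0]])
    return float(state @ z @ state)

def parameter_shift_grad(theta: float) -> float:
    """Gradient from two extra circuit evaluations (parameter-shift rule for RY)."""
    return (expectation_z(theta + np.pi / 2) - expectation_z(theta - np.pi / 2)) / 2

# Classical step: plain gradient descent on the circuit parameter.
theta, lr = 0.1, 0.4
for step in range(50):
    theta -= lr * parameter_shift_grad(theta)

print(f"theta = {theta:.4f}, <Z> = {expectation_z(theta):.4f}")  # theta -> pi, <Z> -> -1
```

Only the circuit evaluations would run on quantum hardware. Bookkeeping, gradients, and the update rule stay classical.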

This setup allows gradual adoption. PennyLane, a library for building hybrid quantum-classical models, and frameworks such as Qiskit let developers experiment with quantum methods alongside classical systems. Cloud-based access also lowers the barrier to entry, since organizations can test quantum approaches without building their own hardware.

In practice, quantum computing is not replacing geospatial systems. It is becoming a supporting layer for problems that are difficult for classical methods alone.

Developing Practical Intuition

Quantum computing is not needed for every problem. For small tasks, classical systems are still faster, cheaper, and more reliable.

It becomes relevant when there is a clear computational bottleneck. This usually appears in problems with large search spaces, high-dimensional data, or strict time constraints. Discussions on quantum advantage in complex systems highlight where classical methods begin to struggle.

Typical signals include:

- very large optimization problems (e.g., routing at city scale)

- datasets with many layers (e.g., hyperspectral imagery)

- situations where approximate solutions are not sufficient

- strong interactions between many variables

In these cases, hybrid or quantum-inspired methods may offer an advantage. Insights from advanced quantum-enabled geospatial analysis show that these approaches are being explored through hybrid workflows focused on optimization and high-dimensional problems. The key takeaway is that quantum methods are used selectively, not as full replacements for classical systems.

A practical starting point is not full quantum adoption, but preparation. This includes structuring data, identifying bottlenecks, and experimenting with hybrid methods so integration becomes easier as the technology matures.
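One concrete form of preparation is to express a bottleneck as a QUBO now and solve it with a classical heuristic. QUBO is a standard input format for quantum annealers, so a later switch of solver could mostly be a backend change. The sketch uses simulated annealing, a classical method rather than a quantum one, on a hypothetical 4-variable QUBO that penalizes choosing two adjacent options. It checks the result against brute force. The weights, cooling schedule, and function names are assumptions.

```python
import math
import random
from itertools import product

def qubo_energy(q, x):
    """Energy sum_{(i, j)} w * x_i * x_j of bit list x under QUBO dict q."""
    return sum(w * x[i] * x[j] for (i, j), w in q.items())

def simulated_annealing(q, n, steps=2000, t_start=5.0, t_end=0.01, seed=0):
    """Metropolis single-bit-flip annealing; returns the best (energy, bits) seen."""
    rng = random.Random(seed)
    x = [rng.randint(0, 1) for _ in range(n)]
    e = qubo_energy(q, x)
    best_e, best_x = e, x[:]
    for step in range(steps):
        t = t_start * (t_end / t_start) ** (step / (steps - 1))  # geometric cooling
        i = rng.randrange(n)
        x[i] ^= 1                                  # propose flipping one bit
        new_e = qubo_energy(q, x)
        if new_e <= e or rng.random() < math.exp((e - new_e) / t):
            e = new_e                              # accept the move
            if e < best_e:
                best_e, best_x = e, x[:]
        else:
            x[i] ^= 1                              # reject: undo the flip
    return best_e, best_x

# Hypothetical QUBO: reward each chosen option (-1), penalize adjacent pairs (+2).
Q = {(0, 0): -1.0, (1, 1): -1.0, (2, 2): -1.0, (3, 3): -1.0,
     (0, 1): 2.0, (1, 2): 2.0, (2, 3): 2.0}

sa_e, sa_x = simulated_annealing(Q, 4)
brute_e = min(qubo_energy(Q, list(bits)) for bits in product((0, 1), repeat=4))
print("annealing:", sa_e, sa_x, "| brute force:", brute_e)
```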

Geospatial systems are moving beyond collecting data toward understanding complex systems in real time. Classical methods took us far, but they struggle as scale and complexity increase.

Quantum approaches introduce a different way to handle these challenges, not by replacing existing systems but by extending what is possible in optimization and high-dimensional analysis.

The change is happening slowly, but it matters. It spans geospatial tasks from routing through cities to detecting environmental change in remote sensing data. The goal is not just to compute faster, but to make better decisions.

The future of geospatial intelligence will not be defined by a single technology. It will be shaped by how classical and quantum systems work together to understand and manage the world more effectively.
