The Earth observation (EO) industry is navigating a mid-life crisis of utility. We have more eyes in the sky than ever, yet a persistent gap remains between the data we produce and how it is used in real-world decisions.

The disconnect is structural. Data is still priced and delivered under assumptions of scarcity that no longer reflect current reality. For decades, charging by the square kilometer made sense in a supply-constrained market. That logic is now breaking down.

The shift is already visible in the numbers. The EO sector reached $5.4 billion in 2024, with value-added services growing at 7-8% annually. Growth is shifting downstream, while standalone imagery is becoming less aligned with how the market creates value.

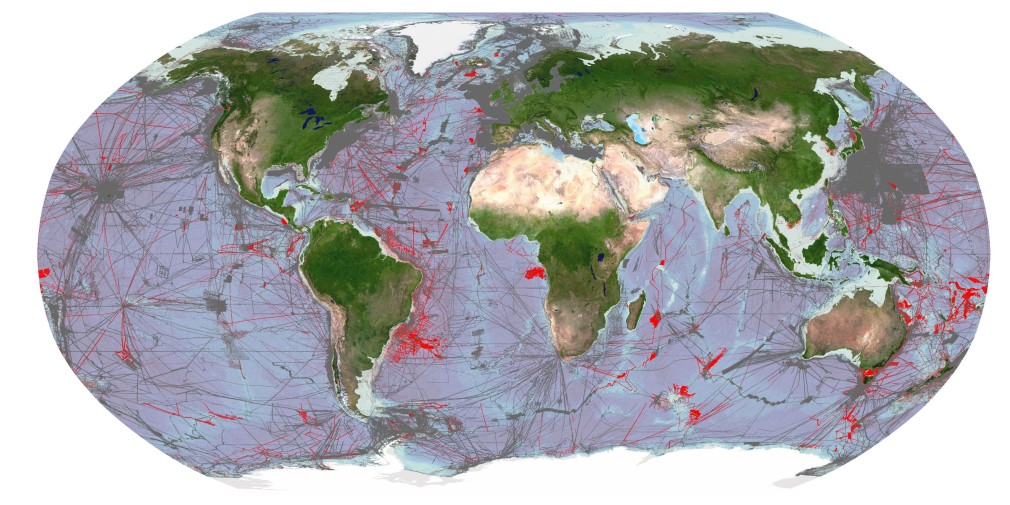

At the same time, the upside remains significant. Industry estimates suggest the total addressable market could nearly double by 2034. But that growth will not come from more satellites alone. It depends on moving beyond raw imagery to interoperable data streams that fit into operational workflows.

This is already reshaping competition. As medium- and high-resolution imagery becomes more widely available, differentiation based purely on spatial resolution or revisit frequency is narrowing. Value is shifting away from the image itself and toward the reliability of the information derived from it.

The Hidden Cost of Inconsistency

The legacy “tasking” model, where a customer pays for a specific capture of a specific coordinate, was designed for reconnaissance. It does not translate well to commercial use. Monitoring supply chains or carbon sequestration at scale requires consistent data over time. A single image is an observation, an anecdote, not a usable signal.

The problem is that imagery is inherently variable. It is shaped by sensor bias, orbital geometry, and atmospheric conditions. When the industry sells images, it transfers the burden of normalization to the customer. At operational scale, that burden becomes a constraint.

Variations from atmosphere, illumination, and viewing geometry routinely produce radiometric differences of several percentage points, often rivaling the underlying signal in applications like vegetation monitoring or soil moisture estimation.
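
To make the scale of that effect concrete, here is a minimal sketch using the widely used Normalized Difference Vegetation Index (NDVI). The reflectance values and the size of the bias are assumptions chosen for illustration, not measurements from any particular sensor.

```python
# Illustrative only: all reflectance values below are assumed, not measured.

def ndvi(nir: float, red: float) -> float:
    """NDVI from near-infrared and red surface reflectance (both on a 0-1 scale)."""
    return (nir - red) / (nir + red)

# Hypothetical reflectances for a dense, healthy canopy.
nir, red = 0.40, 0.05

# Assume an uncorrected atmospheric or viewing-geometry effect adds
# 0.02 (two percentage points) to the red-band reflectance.
red_biased = red + 0.02

baseline = ndvi(nir, red)
biased = ndvi(nir, red_biased)
shift = biased - baseline

print(f"baseline NDVI:   {baseline:.3f}")
print(f"biased NDVI:     {biased:.3f}")
print(f"apparent change: {shift:+.3f}")
```

With these assumed inputs, the index falls from about 0.78 to about 0.70 even though nothing on the ground has changed. An analyst comparing the two acquisitions without correction could read that drop as a change in vegetation condition.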

The idea of a “geospatial tax,” as described by Marc Prioleau, Executive Director of the Overture Maps Foundation, captures this hidden cost. In many enterprise workflows, preparing data for analysis now rivals or exceeds the cost of acquiring it. When analysts spend 80% of their time cleaning data, the result is an expensive image rather than decision-ready intelligence.

This friction helps explain why EO has struggled to scale commercially, despite rapid growth in data availability. It also exposes the limits of the legacy “price per square kilometer” model, which assumes that data is a fungible commodity. As multi-sensor datasets are combined and time-series extend, inconsistencies amplify rather than resolve, creating a compounding barrier to entry for non-specialized industries.

Analytics Is a Symptom, Not the Solution

As any imagery analyst can attest, two images of the same location are not directly comparable without correction. Longer time-series and additional sensors multiply the number of corrections required, so more data does not solve the problem; it makes it harder to manage.
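
One common form of that correction is relative radiometric normalization: fit a gain and offset that map one acquisition onto another, using pixels assumed to be stable between the two dates. The sketch below is an illustration of the idea under stated assumptions, not a production method; the function names and sample reflectances are hypothetical.

```python
from typing import List, Sequence, Tuple


def fit_gain_offset(target: Sequence[float],
                    reference: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of reference ~= gain * target + offset."""
    n = len(target)
    if n != len(reference) or n < 2:
        raise ValueError("need two equal-length samples with at least 2 values")
    mean_t = sum(target) / n
    mean_r = sum(reference) / n
    var_t = sum((t - mean_t) ** 2 for t in target)
    if var_t == 0:
        raise ValueError("target samples have no variance")
    cov = sum((t - mean_t) * (r - mean_r) for t, r in zip(target, reference))
    gain = cov / var_t
    offset = mean_r - gain * mean_t
    return gain, offset


def normalize(values: Sequence[float], gain: float, offset: float) -> List[float]:
    """Apply the fitted linear correction to every value in a band."""
    return [gain * v + offset for v in values]


# Hypothetical reflectances of the same stable surfaces (assumed unchanged
# between dates, e.g. rooftops or bare rock) in two acquisitions.
reference_stable = [0.10, 0.20, 0.30, 0.40]
target_stable = [0.12, 0.23, 0.34, 0.45]  # brighter by an assumed gain and offset

gain, offset = fit_gain_offset(target_stable, reference_stable)
corrected = normalize(target_stable, gain, offset)

print(f"gain={gain:.4f}, offset={offset:.4f}")
print([round(v, 3) for v in corrected])
```

Even this simple version has to be repeated for every band and every image pair, and it depends on choosing surfaces that really are stable. That is the work the customer inherits when buying uncorrected imagery.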

This matters because the fastest growth in the EO market is happening in analytics and decision-support systems. In sectors such as agriculture, insurance, and energy, imagery is used to feed automated workflows. The requirement is to track change reliably across time.

The industry often presents “analytics as a service” as a move up the value chain. In practice, it is a workaround. Users are outsourcing not only interpretation but also the burden of making inconsistent data usable.

What appears to be an analytics problem is, in most cases, a data quality problem.

This is why frameworks such as CEOS Analysis Ready Data (ARD) have gained traction. Without radiometric consistency, geometric alignment, and traceability, scaling analytics becomes difficult. Artificial intelligence makes this constraint clearer. Machine learning models depend on stable inputs; when data varies, outputs drift or degrade.

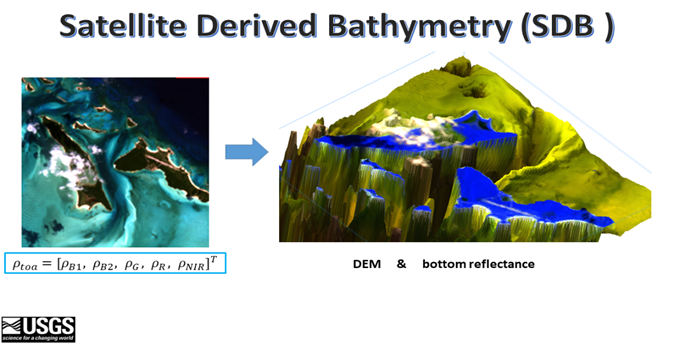

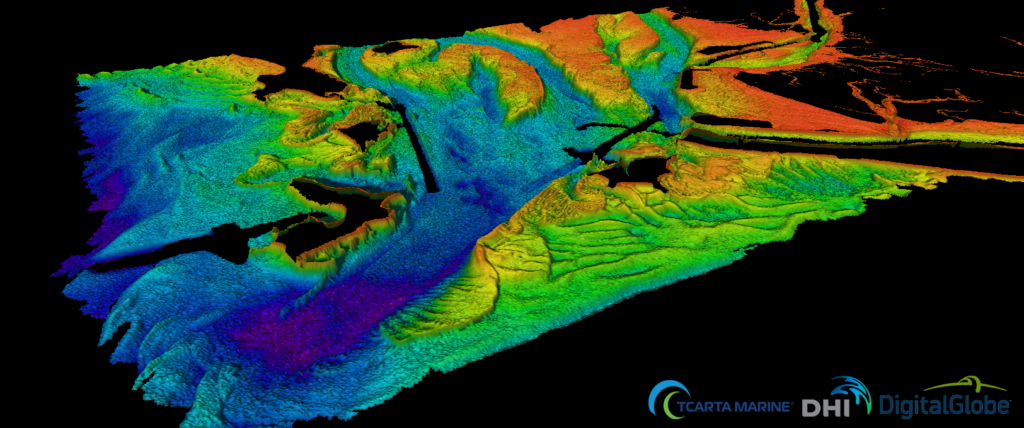

From Imagery to Measurement

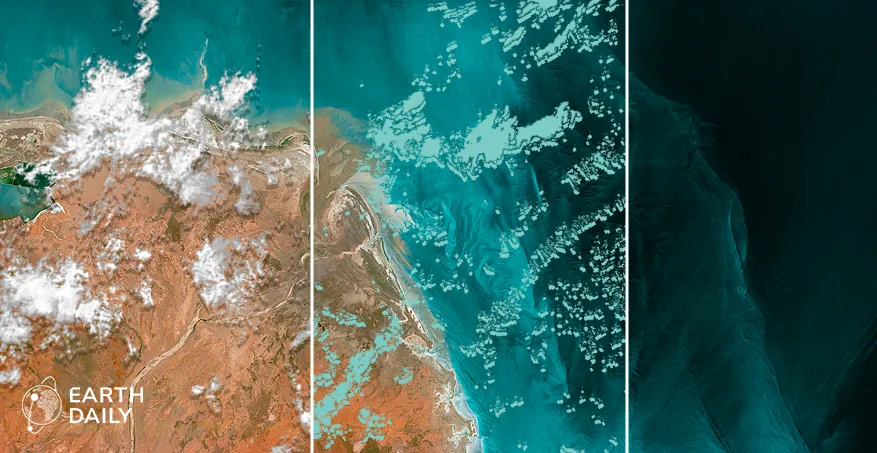

The shift underway is from imagery to measurement. Imagery captures how the Earth looks; measurement captures how it changes, and by how much. The distinction matters because measurement requires consistency across time, sensors, and conditions. Delivering that consistency demands system design that prioritizes calibration, signal-to-noise performance, and spectral depth over visual quality.

This shift is also reshaping where value sits in the market. As satellite capacity has expanded, standard optical imagery in the visible spectrum has come under increasing price pressure. What remains differentiated is not simply resolution, but the ability to extract physically meaningful signals.

That is where the “invisible” spectrum becomes critical. Shortwave Infrared (SWIR) and Thermal Infrared (TIR) bands provide direct insight into moisture stress, material properties, and heat signatures that RGB imagery cannot capture.

But accessing these signals requires a level of radiometric precision, calibration stability, and system-level consistency that is difficult to achieve at scale. This creates a meaningful barrier to entry and reinforces the shift toward systems built to produce stable, comparable measurements over time.

This transition is already underway. New orbital architectures are being designed not as imaging platforms, but as measurement systems, built to deliver consistent, analysis-ready data streams for AI-driven workflows and large-scale operations.

Trading Pixels for Proven Signals

As Earth observation becomes embedded in decision-making, its value is no longer defined by how much data is delivered, but by how reliably it supports high-consequence outcomes. A $100-million decision does not depend on access to imagery; it depends on the integrity and consistency of the signal behind it.

Revenue models are evolving accordingly: one-off image sales are giving way to subscriptions, APIs, and analytics, because imagery is no longer the endpoint in operational workflows.

The industry has largely solved for access, but it has not yet solved for trust. Producing consistent, comparable measurements at scale requires calibration, stability, and system-level coordination that are not reflected in legacy pricing models or in systems optimized for collection alone.

The image is no longer the product. The signal is.

Earth observation will transition from a niche data source to an indispensable utility only when measurement, not imagery, defines both the product and the price. Until then, increasing data volumes will continue to outpace the market’s ability to use them reliably.