GeoAI’s roots go back to early work on AI for geographical problem solving in the 1980s. The field was later formally introduced in 2018, as AI, geospatial big data, and high-performance computing began to converge. Since then, GeoAI has depended heavily on the cloud. Large models were trained and deployed in data centers because they needed serious compute, memory, and power. This worked well when speed was not the main concern.

But field operations are different.

A drone detecting a wildfire cannot wait for every image to travel to a distant server. A self-driving tractor in a rural zone cannot pause until the cloud responds. A rescue team working after an earthquake cannot depend on cell towers that may already be damaged. This is the cloud latency wall: the model may be intelligent, but the connection becomes the weak point.

Modern sensors can collect data faster than field networks can send it to the cloud. In disaster response, drone teams can produce hundreds of gigabytes of imagery, as much as 350 GB in a single emergency, that must reach decision-makers quickly. In farming, John Deere's autonomous tractor uses onboard cameras and neural networks to classify each pixel in about 100 milliseconds, helping the machine decide whether to keep moving or stop.
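A quick calculation shows why the connection, not the model, becomes the bottleneck. The sketch below estimates the upload time for 350 GB of imagery. The 20 Mbit/s uplink is an assumed figure chosen for illustration, not a number from the cases above, and the estimate ignores protocol overhead and dropouts.

```python
def transfer_hours(size_gb: float, uplink_mbps: float) -> float:
    """Hours needed to move size_gb gigabytes over an uplink of uplink_mbps megabits/s.

    Uses decimal units (1 GB = 1e9 bytes, 1 Mbit/s = 1e6 bits/s) and ignores
    protocol overhead, retries, and outages, so real transfers take longer.
    """
    bits = size_gb * 1e9 * 8
    seconds = bits / (uplink_mbps * 1e6)
    return seconds / 3600


# 350 GB of drone imagery over an assumed 20 Mbit/s field uplink
print(f"{transfer_hours(350, 20):.1f} h")   # 38.9 h
# The same data over an assumed, much faster 100 Mbit/s link
print(f"{transfer_hours(350, 100):.1f} h")  # 7.8 h
```

Even with the faster assumed link, the upload takes most of a working day, which is far too slow for an active fire line.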

This is why GeoAI is moving closer to the sensor. Instead of sending all data to the model, the model is moving to the data. Smaller AI systems now run directly on drones, tractors, smartphones, satellites, cameras, and handheld GIS devices.

This article looks at the rise of on-device GeoAI. It explains how models are being compressed, how small language models can support spatial queries, how new mobile chips are making local inference faster, and why privacy, sovereignty, and reliability are pushing geospatial intelligence away from cloud-only systems.

How to Fit the Model: Quantization and Pruning

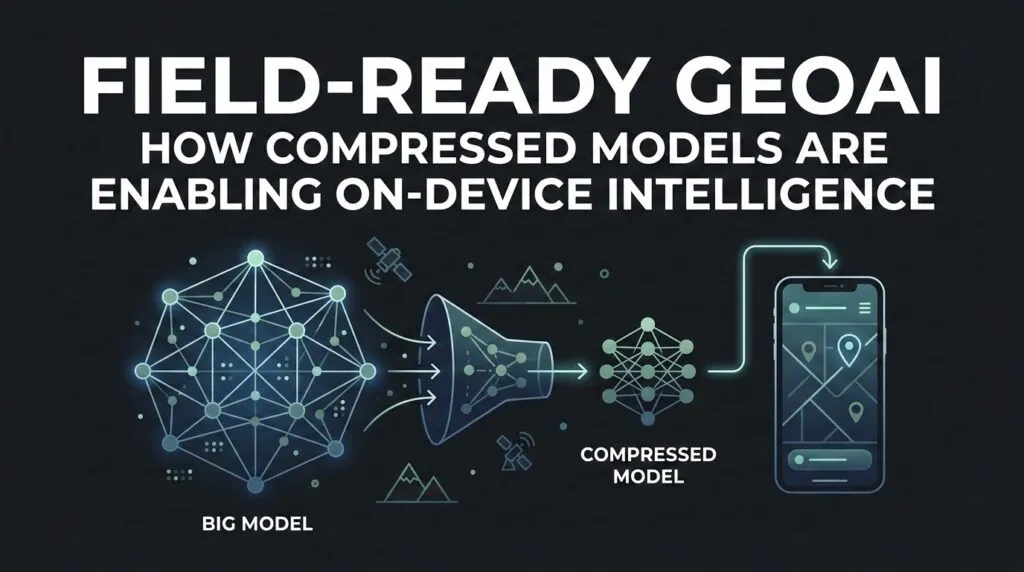

To run GeoAI on a drone, phone, tractor, or field sensor, developers first have to shrink the model.

Large AI models are made of numerical weights. In many full-size systems, these weights are stored in FP32, a 32-bit format that gives high precision but also uses more memory and power. That is fine in a data center. It is harder on a small device with limited battery life.
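To put numbers on this, here is a minimal sketch that estimates weight storage at different precisions. It counts the weights only (no activations, caches, or runtime buffers) and uses 3.8 billion parameters, the parameter count of Microsoft's Phi-3-mini, purely as an example.

```python
# Bytes needed to store one weight in each format
BYTES_PER_WEIGHT = {"FP32": 4.0, "FP16": 2.0, "INT8": 1.0, "INT4": 0.5}


def weight_memory_gb(num_params: float, fmt: str) -> float:
    """Approximate memory for the weights alone, in decimal gigabytes.

    Ignores activations, KV caches, and the extra scale factors that
    quantized formats store, so real on-device memory use is somewhat higher.
    """
    return num_params * BYTES_PER_WEIGHT[fmt] / 1e9


# Example: a 3.8-billion-parameter model
for fmt in BYTES_PER_WEIGHT:
    print(f"{fmt}: {weight_memory_gb(3.8e9, fmt):.1f} GB")
# FP32: 15.2 GB, FP16: 7.6 GB, INT8: 3.8 GB, INT4: 1.9 GB
```

Going from FP32 to 4-bit cuts the weight footprint by a factor of eight, which is often the difference between "needs a server GPU" and "fits in a phone's memory".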

Quantization solves part of this problem. It reduces the precision of the model’s numbers from FP32 to INT8, 4-bit, or other smaller formats. It is like rounding a long decimal into a shorter one. The model loses a little detail, but it becomes much lighter and faster.
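The rounding analogy can be made concrete with the simplest scheme, symmetric per-tensor INT8 quantization: every weight is divided by one shared scale factor and rounded to an integer in [-127, 127]. This is a minimal sketch with made-up weights; production toolchains typically add per-channel scales, calibration data, and mixed precision.

```python
def quantize_int8(weights):
    """Symmetric per-tensor INT8 quantization: w is approximated by scale * q, q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the signed 8-bit range
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map the stored integers back to approximate floating-point weights."""
    return [scale * v for v in q]


weights = [0.42, -1.37, 0.003, 0.88, -0.51]  # illustrative FP32 weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

print(q)  # [39, -127, 0, 82, -47]
print(f"scale={scale:.5f}, max error={max_err:.5f}")
```

Each `q` value fits in one byte instead of four, and because every weight is rounded to the nearest step, the reconstruction error for any weight inside the range is at most half of `scale`.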

For GeoAI, the goal is not just compression. The model still has to detect buildings, roads, ships, crop stress, or fire lines correctly. A study on mixed-precision quantization for SAR ship detection showed that low-bit quantization can greatly reduce model size while keeping the accuracy loss small.

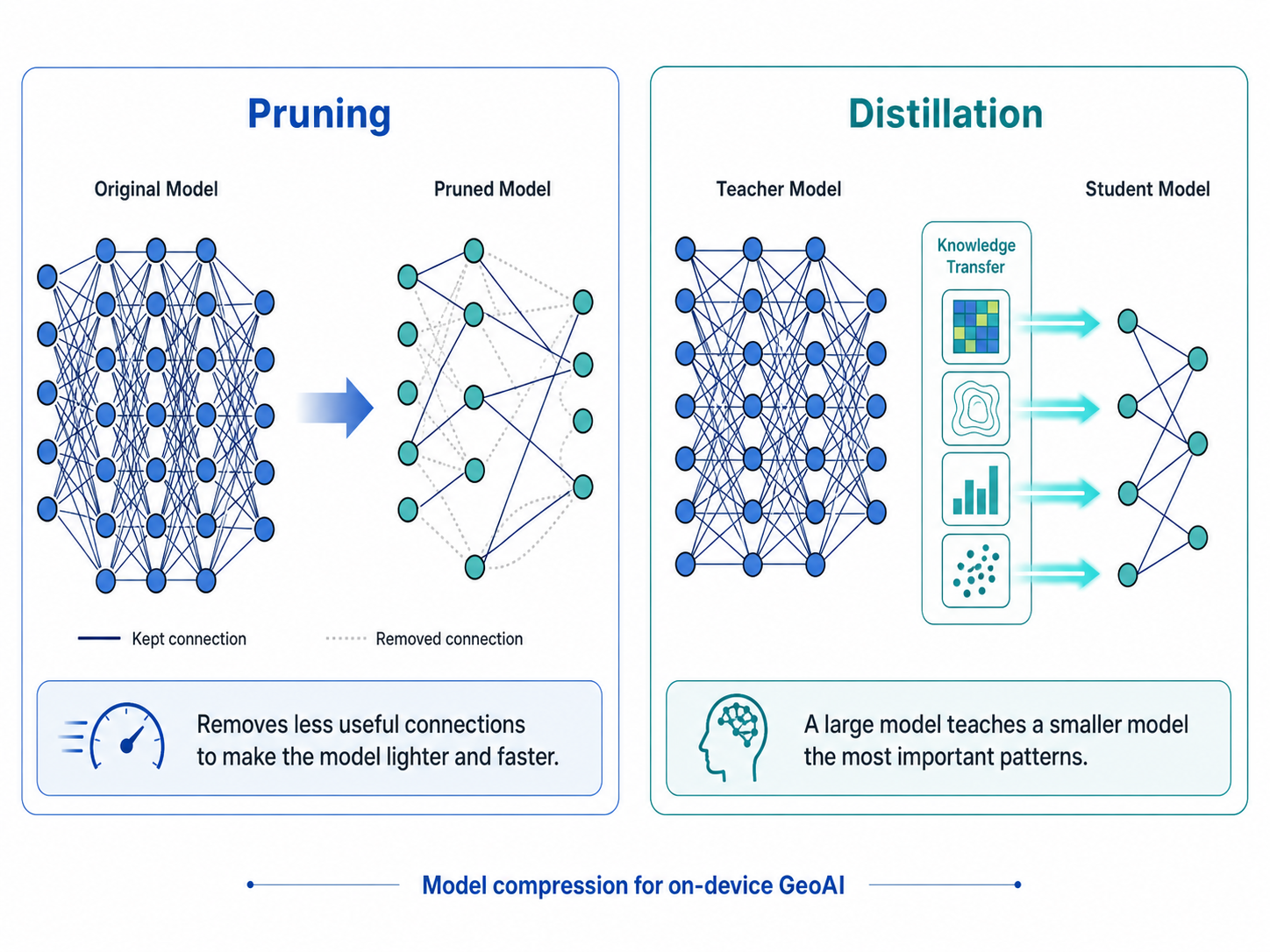

Pruning works differently. It removes parts of the model that do not add much value. Distillation is another method, where a large “teacher” model trains a smaller “student” model to keep the most useful patterns.

Source: AI Imagery
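Both ideas fit in a few lines. The sketch below shows unstructured magnitude pruning (zeroing the smallest-magnitude weights) and a common soft-target distillation loss, in which the student is trained to match the teacher's temperature-softened class probabilities. The weights, logits, and the three land-cover classes are hypothetical, and real pipelines prune and distill during training rather than on a single list of numbers.

```python
import math


def magnitude_prune(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest absolute value."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    # Indices of the k weights that contribute least by magnitude
    drop = set(sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def softmax(logits, temperature=1.0):
    """Numerically stable softmax; a higher temperature gives softer probabilities."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2
    (the widely used soft-target formulation of knowledge distillation)."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


w = [0.9, -0.02, 0.4, 0.01, -0.7, 0.05]
print(magnitude_prune(w, 0.5))  # [0.9, 0.0, 0.4, 0.0, -0.7, 0.0]

# Hypothetical logits for three land-cover classes: burned, healthy, water
teacher = [2.0, 0.5, -1.0]
student = [1.5, 0.8, -0.5]
print(f"distillation loss: {distillation_loss(student, teacher):.4f}")
```

Pruned zeros only save memory and time if the runtime or chip can exploit sparsity, which is one reason pruning is usually combined with quantization or distillation rather than used alone.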

But spatial models are harder to shrink than text models. A text model can survive a slightly awkward sentence. A GeoAI model may not survive a small location error. A few meters can decide whether a building is inside a flood zone or outside it. This is why methods like AlignQ matter. They focus on reducing quantization error while preserving useful relationships in the data.
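The flood-zone example can be checked with a standard ray-casting point-in-polygon test. The polygon and building position below are hypothetical, in a local metric grid. The last lines show a related numeric effect: projected coordinates around 5,000,000 m (a typical UTM northing scale) stored as 32-bit floats can only be represented in 0.5 m steps. This sketch illustrates the sensitivity only; it is not an implementation of AlignQ.

```python
import struct


def point_in_polygon(x, y, polygon):
    """Ray casting: count how many edges a ray going right from (x, y) crosses."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        if (y1 > y) != (y2 > y):
            # x coordinate where this edge crosses the horizontal line through y
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def to_float32(value):
    """Round-trip a Python float through IEEE 754 single precision."""
    return struct.unpack("f", struct.pack("f", value))[0]


# Hypothetical flood-zone polygon in a local metric grid (metres)
flood_zone = [(0.0, 0.0), (100.0, 0.0), (100.0, 80.0), (0.0, 80.0)]
building = (98.5, 40.0)                     # 1.5 m inside the eastern boundary
shifted = (building[0] + 3.0, building[1])  # the same building with a 3 m error

print(point_in_polygon(*building, flood_zone))  # True
print(point_in_polygon(*shifted, flood_zone))   # False

# A UTM-scale northing loses sub-metre detail in float32
print(to_float32(5_000_000.3))  # 5000000.5
```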

For on-device GeoAI, shrinking is not just optimization. It is what allows spatial intelligence to leave the cloud and work where the decision happens.

Small Language Models for Spatial Querying

The way people use maps is also changing. Instead of clicking through complex GIS menus, a field worker may soon ask a device a simple question: “Show me all drainage pipes within 5 meters that are likely blocked.”

Small language models (SLMs) are designed to run with fewer parameters and lower compute needs than very large cloud models. Microsoft's Phi-3-mini, for example, was introduced as a 3.8-billion-parameter model small enough for practical local deployment. Google's Gemma models are likewise designed for applications that run across laptops, phones, and cloud environments.

For GeoAI, this means the map can become more conversational. A worker could ask about nearby assets, blocked drains, unsafe routes, or parcels inside a flood zone. The device would not need to send every query to a remote server. It could interpret the question locally, check local map layers, and return an answer in the field.

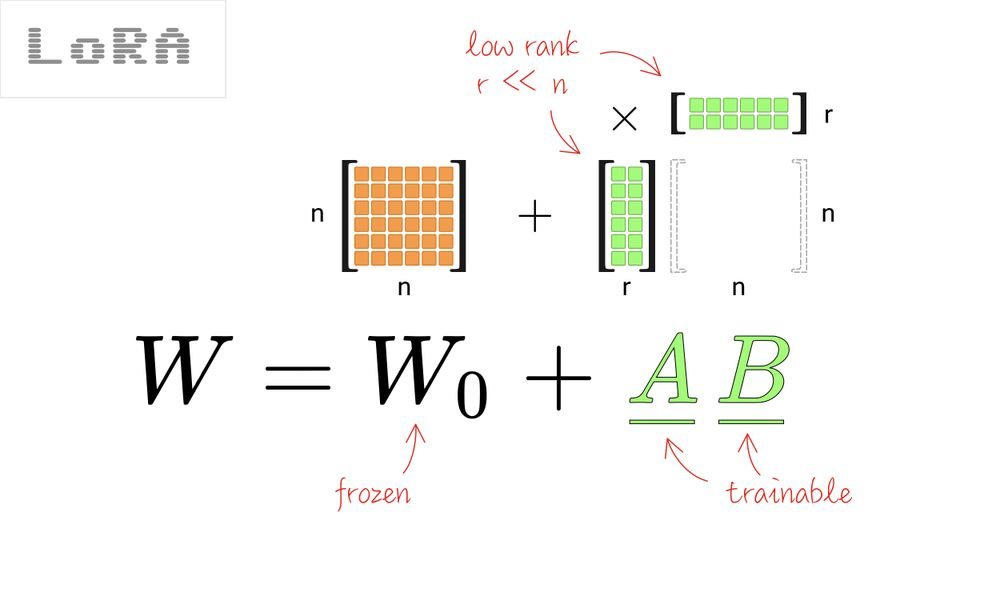

The key technique here is fine-tuning. LoRA, or Low-Rank Adaptation, allows developers to adapt a model for a specific task without retraining every parameter. For GIS, this could mean tuning a small model on local road names, coordinate systems, asset layers, and spatial rules.

Source: Towards Data Science
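The idea behind LoRA is to keep the pretrained weight matrix W frozen and learn a low-rank correction, so the layer computes W x + (alpha / r) B A x with only A and B trained. The pure-Python sketch below shows that forward pass, how the adapter can be merged back into W for deployment, and how few parameters it trains. The 4096 by 4096 layer and rank 8 in the example are illustrative values, not settings from any particular GIS model.

```python
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(row[k] * v[k] for k in range(len(v))) for row in m]


class LoRALinear:
    """Frozen weight W (d_out x d_in) plus a trainable low-rank update B @ A.

    y = W x + (alpha / r) * B (A x); only A (r x d_in) and B (d_out x r) are trained.
    B starts at zero, so the adapted layer initially behaves exactly like the original.
    """

    def __init__(self, weight, rank, alpha, seed=0):
        rng = random.Random(seed)
        self.weight = weight  # frozen pretrained weights
        d_out, d_in = len(weight), len(weight[0])
        self.scale = alpha / rank
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]

    def forward(self, x):
        base = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def merged_weight(self):
        """Fold the adapter into W for deployment: W' = W + (alpha / r) B A."""
        delta = matmul(self.B, self.A)
        return [[w + self.scale * d for w, d in zip(w_row, d_row)]
                for w_row, d_row in zip(self.weight, delta)]


def trainable_params(d_out, d_in, rank):
    """Parameters trained by full fine-tuning vs. by a rank-r LoRA adapter."""
    return d_out * d_in, rank * (d_in + d_out)


print(trainable_params(4096, 4096, 8))  # (16777216, 65536)
```

In this example the adapter trains about 0.4% as many parameters as the full layer, and because it can be merged into W afterwards, a tuned model for one city or utility network adds no extra inference cost on the device.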

This matters because geography is local. A general model may understand the idea of a road, but a useful field model needs to understand this road, this drainage network, and this emergency route.

In this sense, the future map is not only something we view. It is something we ask.

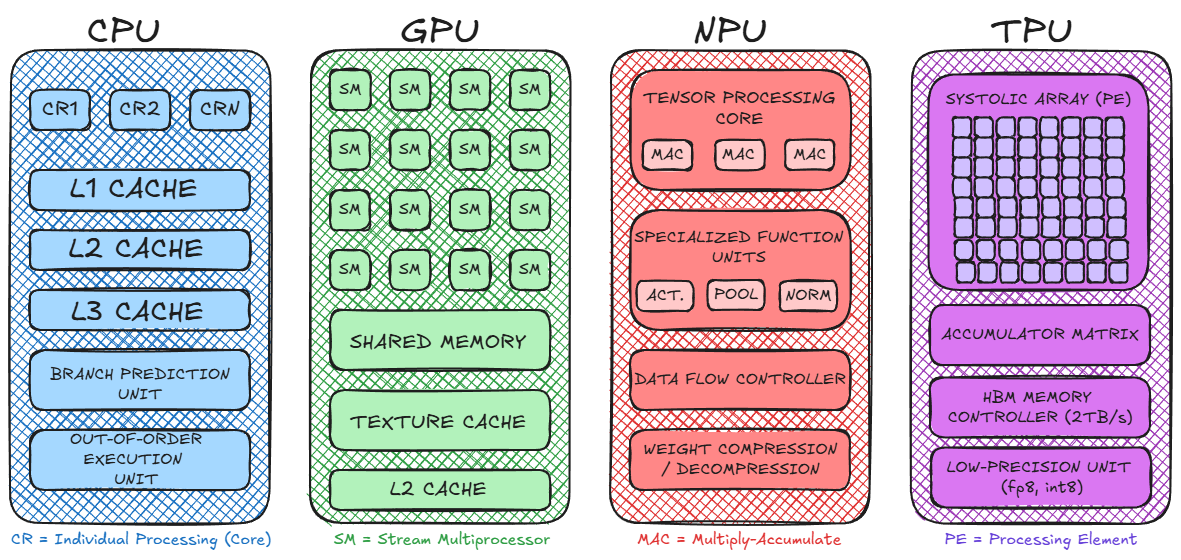

Hardware-Aware GeoAI: NPUs and Mobile Silicon

Shrinking the model is only half the story. The device also needs the right hardware to run it. This is where NPUs (Neural Processing Units) become important. Unlike CPUs, which are built for general-purpose tasks, NPUs are designed for the matrix and tensor arithmetic at the core of AI models. They allow phones, drones, and edge computers to process images, language, and sensor data while using less power.

Source: Koshiq Hossain

For GeoAI, this matters because many tasks are visual. A drone may need to detect a damaged roof. A phone may need to recognize a blocked road. A field camera may need to identify a change in land cover. These tasks are too slow if every frame has to travel to the cloud.

Developers are now using tools such as the Qualcomm AI Stack and Apple Core ML to optimize models for the chips inside real devices. The goal is not only accuracy. It is also speed, battery life, and heat control.

Drone hardware is moving in the same direction. DJI’s Manifold 3 offers up to 100 TOPS of AI compute in a small onboard unit weighing about 120 grams. That kind of edge computing allows drones to analyze imagery while they fly, instead of waiting for cloud processing.
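A peak TOPS rating is best read as a ceiling. The sketch below turns it into an upper bound on frames per second. The 50-GOP model cost and 25% sustained utilization are assumptions for illustration; real throughput also depends on memory bandwidth, pre- and post-processing, and how the model is compiled for the chip.

```python
def max_frames_per_second(peak_tops: float, gops_per_frame: float, utilization: float) -> float:
    """Compute-bound upper limit on inference rate.

    peak_tops: vendor peak rating in tera-operations per second.
    gops_per_frame: operations one inference needs, in giga-operations.
    utilization: fraction of peak actually sustained (an assumption; it
    varies with the model, memory bandwidth, and compiler).
    """
    ops_per_second = peak_tops * 1e12 * utilization
    return ops_per_second / (gops_per_frame * 1e9)


# Assumed figures: 100 TOPS peak, a 50-GOP detection model, 25% sustained utilization
print(round(max_frames_per_second(100, 50, 0.25)))  # 500
```

Vendors often count a multiply-accumulate as two operations, so it is worth checking that the TOPS rating and the model's cost are quoted in the same units before trusting the result.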

This also connects to GPS-denied mapping. Niantic Spatial describes visual positioning systems that use sensors and computer vision to support positioning when GPS is weak or unavailable.

In on-device GeoAI, hardware is no longer just support infrastructure. It decides what the model can actually do in the field.

Privacy, Sovereignty, and “Zero-Cloud” GIS

Speed is not the only reason GeoAI is moving to the device. Privacy and sovereignty matter too.

Geospatial data is often sensitive. A drone image may show a military site. A utility map may reveal the location of power lines, water pipes, or telecom assets. A disaster response map may include the movement of emergency teams. Sending that data to the cloud can create legal, security, and political risks.

This is why many organizations are looking at “zero-cloud” GIS. The idea is simple: process the data where it is collected, and avoid sending sensitive information to a remote server unless it is truly needed. The push is already visible in the wider AI market. IDC predicts that by 2028, CIOs will increase investment in sovereign-ready cloud and data localization environments by 65%. The same logic applies to GeoAI, where location data can be more sensitive than ordinary business data.
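A minimal sketch of the zero-cloud pattern: detections stay on the device, and the only thing prepared for upload is a coarse summary, counted per label per 0.1-degree grid cell (about 11 km north to south). The detections, labels, and cell size are hypothetical, and coarsening on its own is a policy choice, not a formal anonymization guarantee.

```python
import math
from collections import Counter

# Hypothetical on-device detections: (label, latitude, longitude). These never leave the device.
detections = [
    ("damaged_roof", 47.3812, 8.5391),
    ("damaged_roof", 47.3855, 8.5402),
    ("blocked_road", 47.3820, 8.5477),
    ("damaged_roof", 47.4511, 8.6012),
]


def cell_id(lat, lon, cells_per_degree=10):
    """Integer index of the coarse grid cell containing (lat, lon)."""
    return (math.floor(lat * cells_per_degree), math.floor(lon * cells_per_degree))


def export_summary(detections, cells_per_degree=10):
    """Build the only payload allowed off the device: counts per label per coarse cell.
    Exact coordinates and imagery are not included."""
    counts = Counter((label, cell_id(lat, lon, cells_per_degree))
                     for label, lat, lon in detections)
    return [{"label": label, "cell": cell, "count": n}
            for (label, cell), n in sorted(counts.items())]


for row in export_summary(detections):
    print(row)
```

Changing `cells_per_degree` is the single knob that trades map detail against how much location information leaves the device.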

Defense and public safety are clear examples. SIPRI’s work on the military AI industry shows how AI is becoming part of national security systems, where control over data and infrastructure is critical.

On-device GeoAI also helps when networks fail. Edge AI systems can support real-time intelligence without cloud connectivity, which matters for first responders, defense teams, and field crews working in remote or damaged environments.
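A common way to keep field devices useful when the link drops is store-and-forward: inference runs locally, results go into a durable local queue, and the queue is drained when connectivity returns. The sketch uses Python's built-in `sqlite3` module; the `send` callback and the `link_up` flag are hypothetical placeholders for a real uploader and network check.

```python
import json
import sqlite3
import time


class StoreAndForward:
    """Queue results in local SQLite and upload them when a link is available.

    `send` is a caller-supplied function (hypothetical here) that returns True
    on successful delivery; a result is deleted only after it has been sent.
    """

    def __init__(self, path=":memory:"):
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS outbox ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, created REAL, payload TEXT)"
        )

    def record(self, result: dict) -> None:
        """Persist one inference result; works with or without connectivity."""
        with self.db:  # commits the insert
            self.db.execute("INSERT INTO outbox (created, payload) VALUES (?, ?)",
                            (time.time(), json.dumps(result)))

    def pending(self) -> int:
        return self.db.execute("SELECT COUNT(*) FROM outbox").fetchone()[0]

    def flush(self, send, link_up: bool) -> int:
        """Deliver queued results oldest-first; stop at the first failure."""
        if not link_up:
            return 0
        sent = 0
        rows = self.db.execute("SELECT id, payload FROM outbox ORDER BY id").fetchall()
        for row_id, payload in rows:
            if not send(json.loads(payload)):
                break
            with self.db:
                self.db.execute("DELETE FROM outbox WHERE id = ?", (row_id,))
            sent += 1
        return sent


queue = StoreAndForward()
queue.record({"label": "blocked_road", "cell": [473, 85]})
queue.record({"label": "damaged_roof", "cell": [473, 85]})

delivered = []


def fake_send(message):
    delivered.append(message)  # hypothetical uploader; always succeeds here
    return True


print(queue.flush(fake_send, link_up=False), queue.pending())  # 0 2
print(queue.flush(fake_send, link_up=True), queue.pending())   # 2 0
```

Passing a file path instead of `":memory:"` keeps the queue across reboots, which matters for crews whose devices may lose power before they regain a signal.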

In this context, small models are not just efficient. They give organizations more control over their maps, their sensors, and their decisions.

The rise of on-device GeoAI changes the way we think about geospatial intelligence.

For years, the industry imagined one large model in the cloud that could understand everything. But the future may look different. Instead of one central “god model,” we may see millions of smaller geospatial agents working inside phones, drones, sensors, vehicles, and satellites.

The evidence is piling up. Esri is bringing GeoAI and foundation models into geospatial workflows. DJI is encouraging developers to build onboard AI applications for drones. Niantic Spatial is working on visual positioning systems that help machines understand location when the GPS signal is weak or unavailable.

For GIS developers, this changes the skill set. Training a model is no longer enough. The next challenge is making that model small, fast, private, and reliable enough to work where the decision happens.

The most useful GeoAI will not always be the largest model. It will be the model that can run in the field, understand the local context, and act at the right moment.
