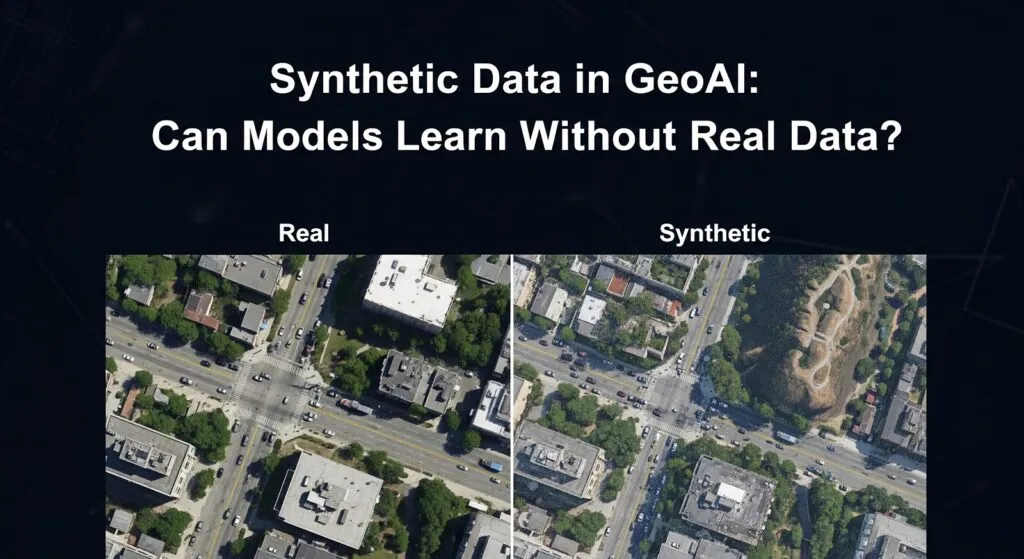

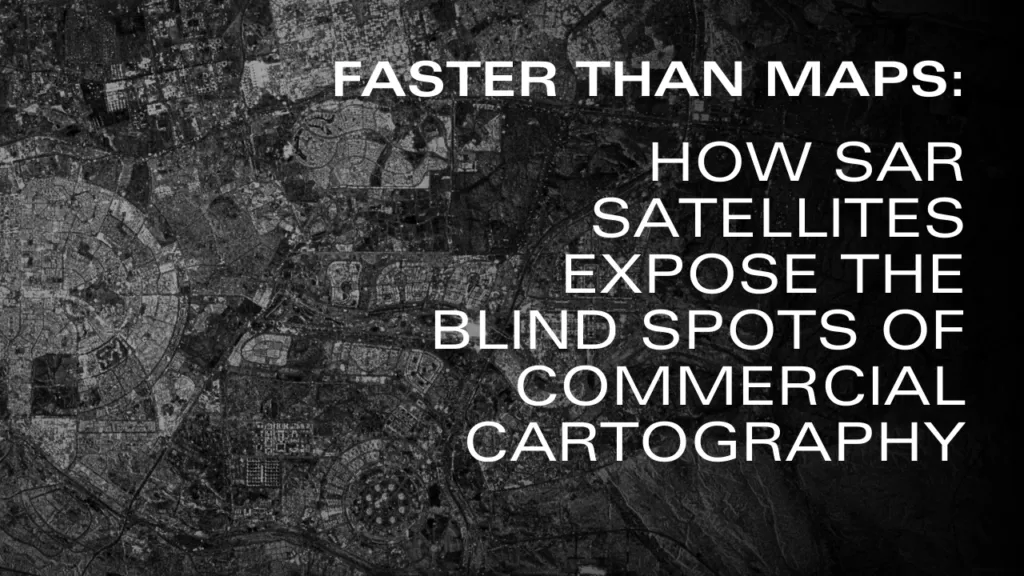

Faster than Maps: How SAR Satellites Expose the Blind Spots of Commercial Cartography

Why commercial maps are falling behind global urbanization, and how multi-modal AI pipelines are the real solution

If you drop a pin at coordinates 29.96, 31.73 on a standard commercial web map in 2026, you will find a fragmented picture. You might see a skeletal network of roads and scattered clusters of building polygons, but they are surrounded by vast, unexplained gaps of empty beige space. However, switch your view to a recent satellite image, and the missing puzzle pieces immediately appear: a highly dense, fully constructed urban fabric—complete with paved streets and residential blocks—filling every one of those gaps.

Figure 1: The Cartographic Lag in action (March 2026). Top: Commercial vector maps show an empty desert. Bottom: Sentinel-2 optical imagery reveals the true urban density in the exact same location.

This is part of Egypt’s New Administrative Capital. Announced in 2015 with construction beginning in 2016, this megaproject has been growing in the desert for a decade. Yet what we are witnessing on our screens is the ‘Cartographic Lag’: a growing phenomenon where concrete is poured significantly faster than human mapmakers, traditional algorithms, or new AI methods can update the vectors. When commercial cartography leaves entire neighborhoods invisible, how do we monitor the world’s rapid urban expansion? The answer doesn’t lie in drawing better maps, but in teaching machines to read the planet’s physical structure.

Why does this happen? Traditional map-making, even when assisted by modern Artificial Intelligence, relies heavily on optical satellite imagery. To update a vector map, an algorithm or a human contributor needs a perfectly clear, cloud-free photo to trace the outline of a new building and convert it into a neat polygon. However, commercial high-resolution satellites only revisit the same location occasionally, and the sheer scale of modern megaprojects quickly overwhelms this step-by-step approach. The result is a fragmented spatial database where the map becomes obsolete the moment the concrete dries. The problem disproportionately affects the Global South, from the megaprojects of the Middle East to the rapidly expanding informal settlements of Latin America, where rapid urbanization outpaces the commercial priorities of tech giants. Relying solely on optical extraction means we are always looking at the past.

To bypass this cartographic bottleneck, we must stop looking at colors and start measuring geometry. This is where Synthetic Aperture Radar (SAR) from satellites like Copernicus Sentinel-1 becomes a game-changer. Unlike optical cameras, radar sensors don’t care about clouds, weather, or daylight; they emit microwaves that bounce back strongly when hitting solid, human-made structures like steel and concrete.

Figure 2: Real-time physical detection. Top: Raw Sentinel-1 SAR imagery capturing structural backscatter. Bottom: Pixels above a simple threshold (> -12 dB) highlighted in red, capturing human-made structures regardless of cloud cover or daylight, but with many false positives.

Is this raw radar extraction a perfect, ready-to-use map? No. As seen in the red overlay, simple thresholding can pick up false positives like construction cranes, temporary scaffolding, or rocky terrain.
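The thresholding step behind the red overlay can be sketched in a few lines of plain Python. This is only an illustration, not the Earth Engine script linked at the end of the article: the tile values are invented, `threshold_mask` is a hypothetical helper, and the only number taken from the article is the -12 dB cutoff.

```python
THRESHOLD_DB = -12.0  # cutoff quoted in the Figure 2 caption


def threshold_mask(backscatter_db, threshold_db=THRESHOLD_DB):
    """Return a binary grid: 1 where backscatter exceeds the threshold, else 0."""
    return [[1 if value > threshold_db else 0 for value in row]
            for row in backscatter_db]


# Hypothetical 3x4 tile of radar backscatter in dB (illustrative values only).
tile_db = [
    [-21.5, -19.0, -8.2, -6.9],
    [-20.3, -11.4, -7.5, -18.8],
    [-22.1, -20.7, -13.0, -5.1],
]

mask = threshold_mask(tile_db)
flagged = sum(map(sum, mask))  # number of cells marked as "structure"

for row in mask:
    print(row)
print("flagged cells:", flagged)
```

A single cutoff like this knows nothing about what produced the strong return, which is why a crane or a rocky outcrop can light up just as brightly as a finished building.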

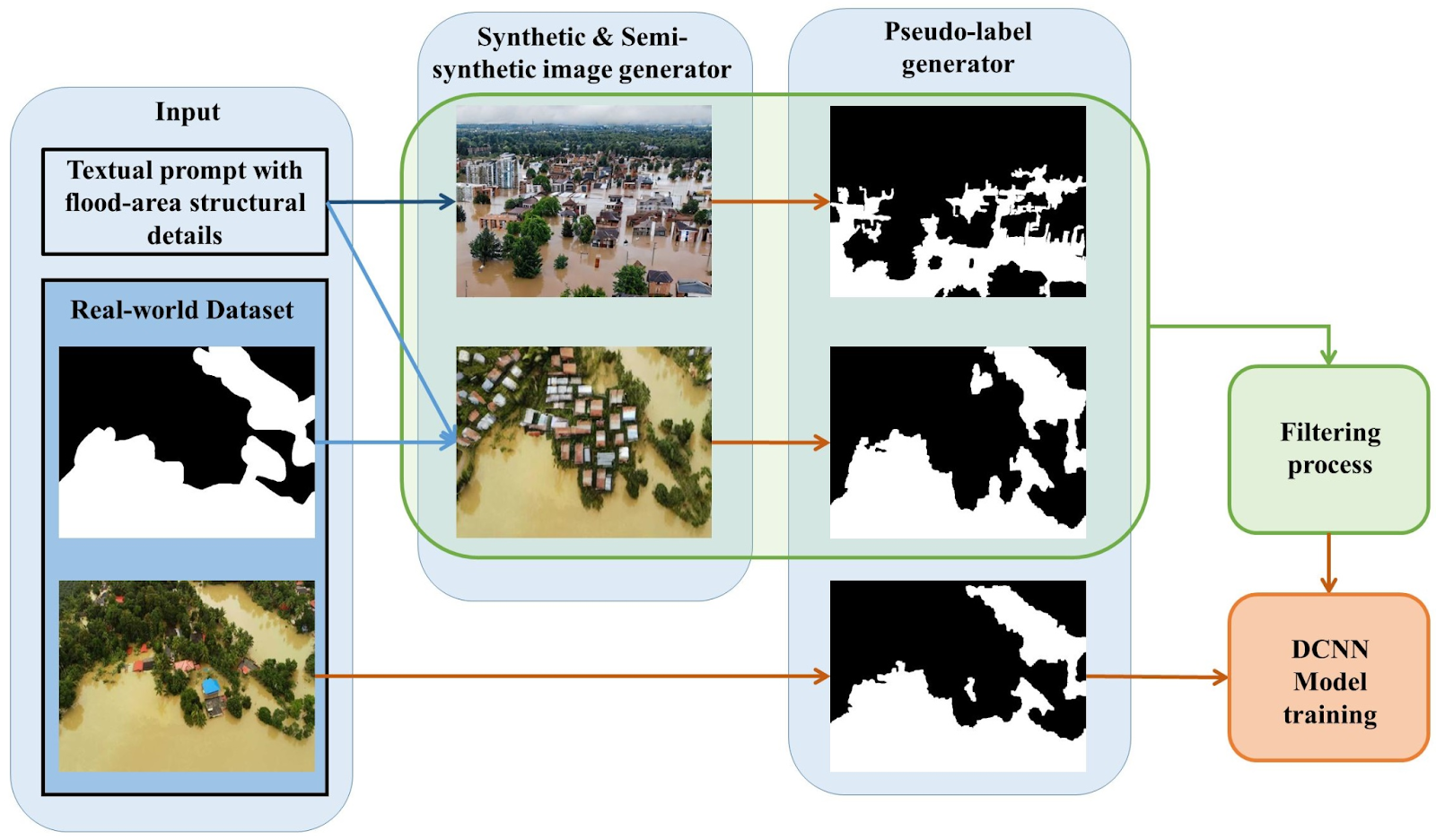

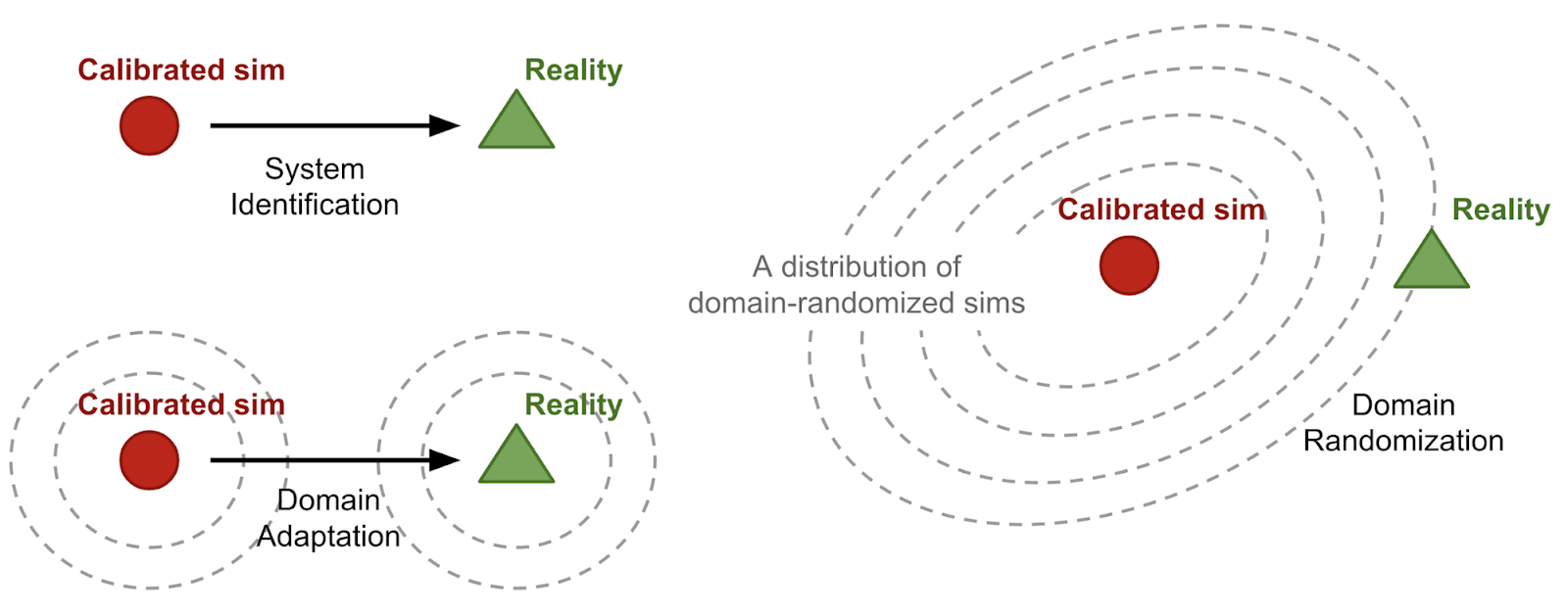

One might ask: why not just train a classical Machine Learning model on well-mapped cities and apply it here to clean this up? While transfer learning is possible, classical models typically suffer from severe domain shift. A model trained on the consolidated urban fabric of existing cities will struggle to generalize to the unique spectral and radar signatures of a half-built megacity in the desert. Correcting this shift classically would require a huge manual effort to create new labeled data (polygons) for every new environment, reinventing the very human bottleneck we are trying to avoid. Achieving true global generalization requires self-supervised learning on petabytes of raw, unlabeled data.

This is exactly why the industry has shifted toward massive Foundation Models. So, why not simply hand the task over to advanced GeoAI like Google DeepMind’s AlphaEarth?

Figure 3: AlphaEarth 2025 multi-dimensional embedding (Bands A01, A16, A09). The GeoAI perfectly captures the consolidated urban context but misses the newest residential blocks visible in 2026, highlighting the temporal lag of annual foundation models.

If you look closely at the AlphaEarth visualization, you will notice a fascinating limitation. While the AI brilliantly captures the consolidated urban footprint in its multidimensional embedding, it completely misses the newly built grey residential blocks visible in the 2026 optical image. Why? Because computing 64-dimensional embeddings for the entire planet requires ingesting petabytes of multi-modal data, forcing these massive models to be published as annual composites. AlphaEarth is currently showing us its “memory” of 2025. It possesses deep contextual intelligence, but it operates with its own temporal lag.

The solution to the Cartographic Lag is not to choose between fast radar or smart AI, but to build a continuous, hybrid monitoring pipeline. We must use the high-frequency, cloud-penetrating SAR data as a real-time ‘tripwire’. When the radar detects fresh concrete today (2026) in an area where the latest GeoAI baseline (2025) sees only desert, it flags an immediate discrepancy. The system can then clean the noise, cross-reference it with the AI’s contextual knowledge, and instantly output accurate urban vectors. To keep up with the megacities of tomorrow, we must transition from manually drawing static maps to continuously computing living infrastructure.
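The tripwire idea can be sketched as a comparison between two grids: today's radar backscatter and last year's built-up baseline. The sketch below is a toy under stated assumptions, not the proposed pipeline itself. The `tripwire` function, the grid values, and the neighbour-count noise filter are all hypothetical stand-ins; in particular, the baseline is assumed to be already reduced to a built/not-built mask, a step the article does not specify.

```python
def tripwire(sar_db, baseline_built, threshold_db=-12.0, min_neighbors=2):
    """Flag cells that are radar-bright today but not built in the baseline.

    A cell is kept only if at least `min_neighbors` of its 8 neighbours are
    also radar-bright; this is a crude stand-in for the noise-cleaning step.
    """
    rows, cols = len(sar_db), len(sar_db[0])
    bright = [[sar_db[r][c] > threshold_db for c in range(cols)]
              for r in range(rows)]
    flags = []
    for r in range(rows):
        for c in range(cols):
            if not bright[r][c] or baseline_built[r][c]:
                continue  # nothing detected, or already known to the baseline
            neighbors = sum(
                bright[rr][cc]
                for rr in range(max(0, r - 1), min(rows, r + 2))
                for cc in range(max(0, c - 1), min(cols, c + 2))
                if (rr, cc) != (r, c)
            )
            if neighbors >= min_neighbors:
                flags.append((r, c))
    return flags


# Hypothetical 4x5 radar tile in dB: a bright block plus one isolated bright cell.
sar_today = [
    [-20, -20, -20, -20, -6],
    [-20, -8, -7, -20, -20],
    [-20, -9, -6, -20, -20],
    [-20, -20, -20, -20, -20],
]
# Hypothetical baseline mask: only the left column of the block existed last year.
baseline_2025 = [
    [0, 0, 0, 0, 0],
    [0, 1, 0, 0, 0],
    [0, 1, 0, 0, 0],
    [0, 0, 0, 0, 0],
]

new_cells = tripwire(sar_today, baseline_2025)
print(new_cells)  # [(1, 2), (2, 2)]
```

The isolated bright cell in the top-right corner is dropped by the neighbour filter, while the two cells that extend the known block are flagged as new construction candidates. A real system would replace that filter with the contextual cross-check described above.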

Try it yourself: Visualizing the Cartographic Lag

This article proposes a theoretical hybrid pipeline to solve the Cartographic Lag. To help you visualize the exact problem we are trying to solve, I have written a Google Earth Engine script that overlays the real-time physical backscatter of Sentinel-1 (2026) with DeepMind’s AlphaEarth embeddings (2025).

Read the code: You can review the exact data extraction logic in this GitHub Gist: https://gist.github.com/rinvictor/93806c694118daf348556c4ee134e75a

Run the interactive map: You don’t need to set up a local environment or write any code to see this in action. Click the link below to open my public GEE workspace and explore the Cartographic Lag in real-time in our sample zone: https://code.earthengine.google.com/06b7710445999e0dbf6a3f961a215aef
